Premature optimization kills a lot of projects, but keeping things running smoothly without spending extra money on hosting is important.

While I certainly don't want to think about scaling the MVP to support millions of users, I do think about what can reduce the load on my web and database servers.

Keeping things running smoothly is often much easier with fewer servers and services. If you can optimize the software to run on a single server, you save the time and money of setting up multiple servers and configuring them to work together.

As I was building Maker Network, I needed to calculate the number of people a user had made products with. This involves getting a list of products a user has worked on, getting all the makers of those products, and aggregating the count so that working with the same person more than once doesn't count twice.

This is possible with a single SQL query, but with an ever-increasing number of users and projects in the database, the query will keep getting more expensive and time-consuming. Doing this on every page load for every user could put an undue burden on the servers.
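To make the shape of that query concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `product_maker` pivot table, its columns, and the sample rows are assumptions made up for illustration, not the actual Maker Network schema.

```python
import sqlite3

# Hypothetical schema: one row per (product, maker) pair.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE product_maker (product_id INTEGER, maker_id INTEGER);
    INSERT INTO product_maker VALUES
        (1, 10), (1, 11), (1, 12),
        (2, 10), (2, 11),
        (3, 13);
""")

def count_collaborators(conn, maker_id):
    """Count distinct other makers who share at least one product with maker_id."""
    row = conn.execute(
        """
        SELECT COUNT(DISTINCT other.maker_id)
        FROM product_maker AS mine
        JOIN product_maker AS other
          ON other.product_id = mine.product_id
        WHERE mine.maker_id = ?
          AND other.maker_id <> ?
        """,
        (maker_id, maker_id),
    ).fetchone()
    return row[0]
```

The self-join finds everyone attached to the same products, and `COUNT(DISTINCT ...)` is what keeps a repeat collaborator from being counted more than once. The join grows with the size of the pivot table, which is why running it on every page load gets expensive.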

Caching

Most modern frameworks provide easy ways to cache any expensive operation.

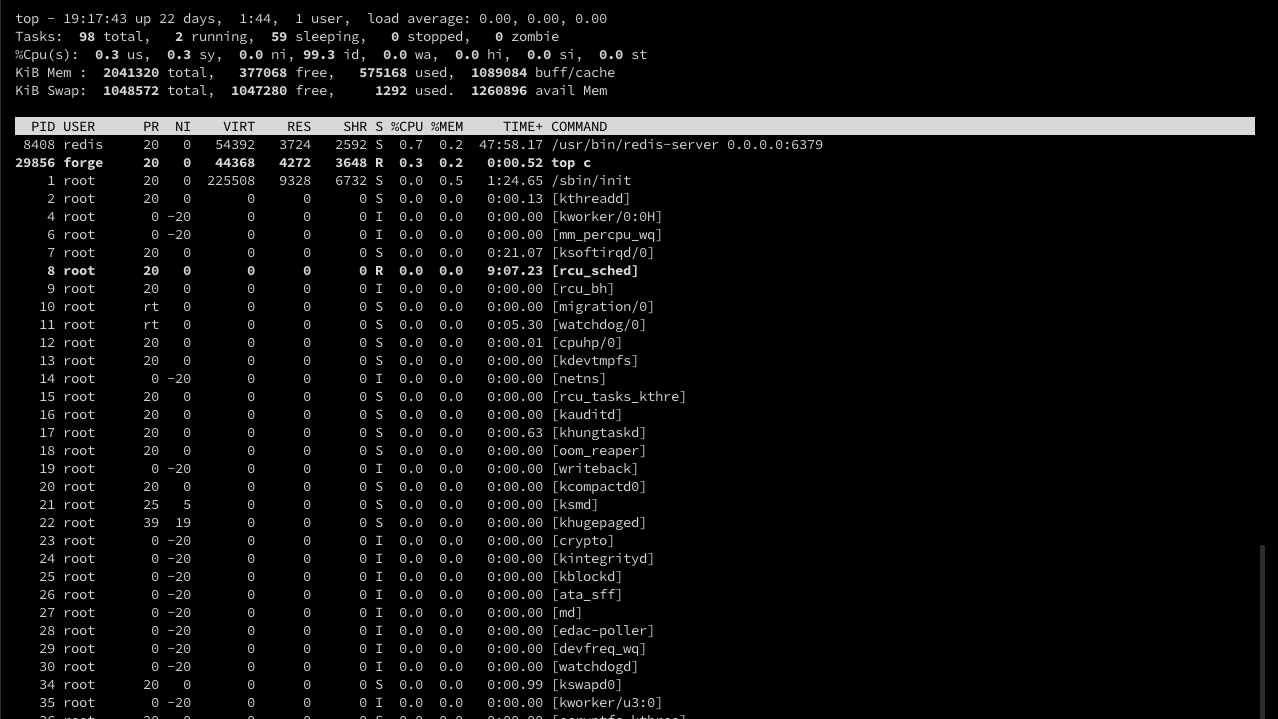

Laravel has very good caching support and can store cached values on disk, in the database, or in external services like Redis or Memcached. Using Forge for server setup means those services are already available on my servers.

The tricky part with caching is knowing how long to cache things for. If you don't cache for long enough, you'll actually end up adding load to the server. If you cache things for too long, you can end up serving stale or inaccurate information.

You need to consider how often the underlying information will change and use your best guess as to a good time for things to live in the cache based on how expensive the operation is.

I typically take a guess erring on the short side, then watch the load on the server to see if it feels good or if the cache lifetime needs to be longer.
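The "compute once, reuse until it expires" idea can be sketched in a few lines. This is a toy in-memory version, not Laravel's implementation; the `TTLCache` class and its `remember` method are hypothetical names chosen to loosely mirror the pattern Laravel exposes as `Cache::remember`.

```python
import time

class TTLCache:
    """Tiny in-memory cache with per-key expiry (illustrative only)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock   # injectable so expiry can be tested without sleeping
        self._store = {}      # key -> (expires_at, value)

    def remember(self, key, ttl_seconds, compute):
        """Return the cached value for key, recomputing it once the TTL has passed."""
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]                      # still fresh: skip the expensive work
        value = compute()                        # missing or expired: recompute
        self._store[key] = (now + ttl_seconds, value)
        return value
```

A call such as `cache.remember("collaborators:10", 600, lambda: run_query(10))` (where `run_query` stands in for the expensive operation) would run the query at most once every ten minutes for that key. The `ttl_seconds` argument is the knob discussed above: too short and the query runs almost as often as before, too long and the number goes stale.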

Pre-calculating

Sometimes rather than caching we can pre-calculate any data we need.

In this example, I know the underlying information (the products and their lists of makers) only changes when we update the data from Product Hunt. Why not simply calculate the count at that point and store it with the user record?

The nice thing about caching is that we only spend time calculating information someone actually requests. With pre-calculating, we have to weigh the cost of computing the numbers for everyone in the database, even if that data is never viewed.

In some cases the operation is so expensive that doing it while servicing a request is out of the question, and pre-calculating is the only option.

In this instance that was not the case, but I opted to pre-calculate anyway.

Why? I wanted to build a list of the top connected makers, and having the data pre-calculated for everyone made that very easy to build!
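Here is a rough sketch of what pre-calculating looks like and why it makes the leaderboard trivial. It again uses `sqlite3`; the `makers` table, the `collaborator_count` column, and the sample data are all hypothetical, and the refresh function is assumed to run right after an import from Product Hunt.

```python
import sqlite3

# Hypothetical schema: makers carry a stored count alongside the pivot table.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE makers (
        id INTEGER PRIMARY KEY,
        name TEXT,
        collaborator_count INTEGER DEFAULT 0
    );
    CREATE TABLE product_maker (product_id INTEGER, maker_id INTEGER);
    INSERT INTO makers (id, name) VALUES (10, 'Ada'), (11, 'Ben'), (12, 'Cy'), (13, 'Dee');
    INSERT INTO product_maker VALUES (1, 10), (1, 11), (1, 12), (2, 10), (2, 13), (3, 13);
""")

def refresh_collaborator_counts(conn):
    """Recompute the stored count for every maker (run after each data import)."""
    conn.execute("""
        UPDATE makers
        SET collaborator_count = (
            SELECT COUNT(DISTINCT other.maker_id)
            FROM product_maker AS mine
            JOIN product_maker AS other
              ON other.product_id = mine.product_id
            WHERE mine.maker_id = makers.id
              AND other.maker_id <> makers.id
        )
    """)
    conn.commit()

def top_connected(conn, limit=3):
    """Read the leaderboard straight from the stored column; no joins needed."""
    return conn.execute(
        "SELECT name, collaborator_count FROM makers "
        "ORDER BY collaborator_count DESC, name ASC LIMIT ?",
        (limit,),
    ).fetchall()
```

Once the counts live on the maker rows, the "top connected" list is a plain sorted read, and a profile page only has to load one already-stored number. Building the same list from a per-user cache would mean running the expensive query for every maker anyway.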

With some smart caching and a little pre-calculating, I'm able to keep the typical load levels on the server very low.